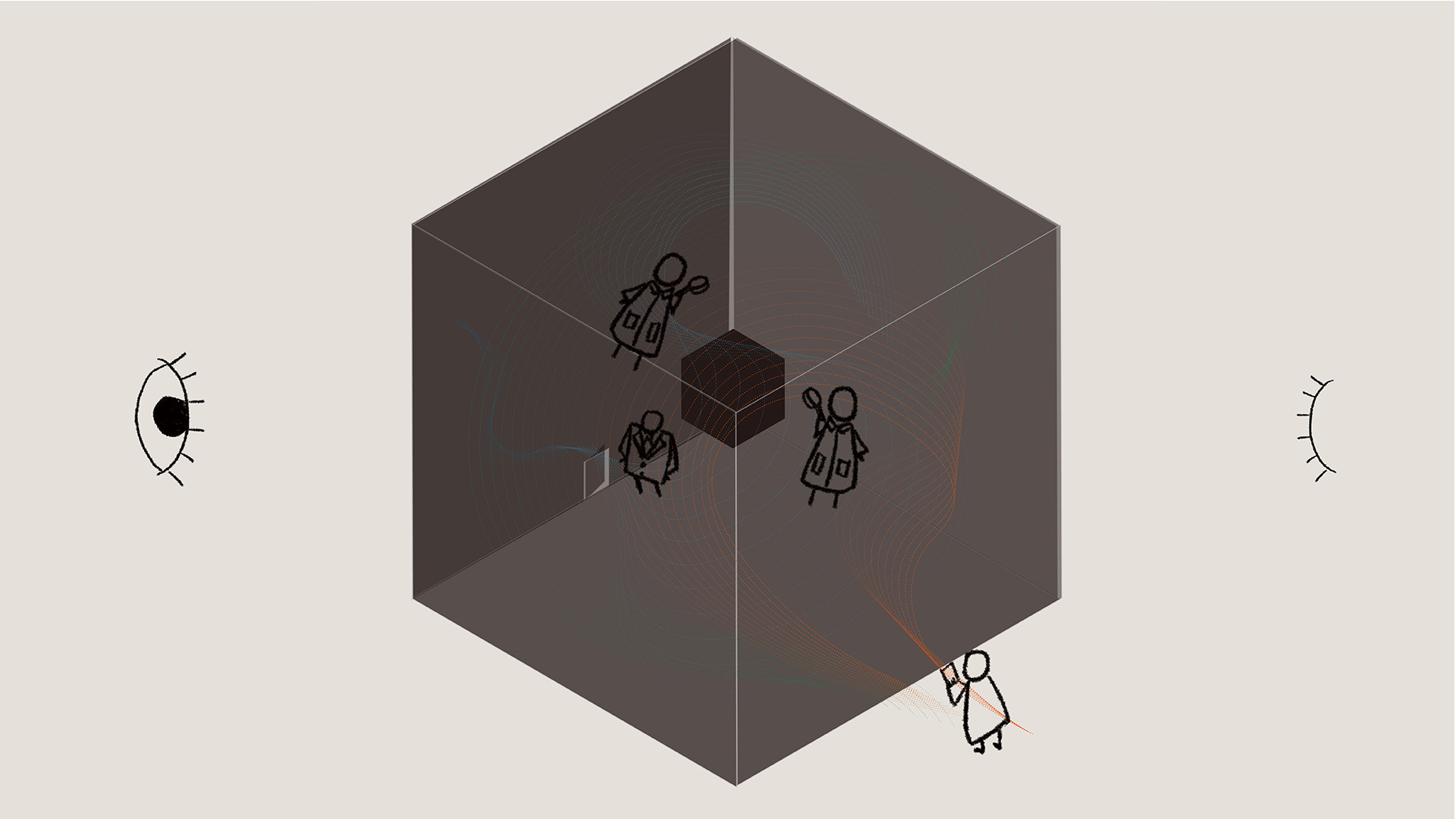

Imagine that the number of steps we walk, our heart rate, the blinks of our eyes, and the strands of hair we shed can all be recorded, and that even our emotions can be measured and stored, all so that AI can provide the ‘best’ personalized advice and services. Surveillance is everywhere. In the future digital world we may be more ‘naked’ and exposed to more risks, ranging from identity theft and misinformation to extremism and more. And when we know that these smart services are built to make money for capital by analyzing our data, can we control the information we receive, and can we trust that it serves only our benefit? The world of data is abstract, and few people know how the digital world works. It is like being in a room walled with one-way glass, and it raises a question: can people trust technologies such as big data and AI?

Yet today we all trust, or have to trust, the technology around us. Drawing mainly on the paper ‘Trust and Distrust: New Relationships and Realities’ (Lewicki, McAllister & Bies, 1998), this project created an online technology trust self-test presented as a series of interactive videos. The test was designed to reveal how people place their trust in technology and to draw the audience’s attention to this topic.

Self-test link: https://www.bilibili.com/video/BV1BM411B7oM